Here's a sketch of how I'd build something like the original PLoS Hubs vision (see The demise of the @PLoS Biodiversity Hub: what lessons can we learn? for background). In the blog post explaining the vision behind PLoS Hubs (Aggregating, tagging and connecting biodiversity studies), David Mindell wrote:

The Hub is a work in progress and its potential benefits are many. These include enhancing information exchange and databasing efforts by adding links to existing publications and adding semantic tagging of key terms such as species names, making that information easier to find in web searches. Increasing linkages and synthesizing of biodiversity data will allow better analyses of the causes and consequences of large scale biodiversity change, as well as better understanding of the ways in which humans can adapt to a changing world.

So, first up, some basic principles. The goal (only slightly tongue in cheek) is for the user to never want to leave the site. Put another way, I want something as engaging as Wikipedia, where almost all the links are to other Wikipedia pages (more on this below). This means every object (publication, taxon name, DNA sequence, specimen, person, place) gets a "page". Everything is a first class citizen.

It seems to me that the fundamental problem faced by journals that "semantically enhance" their content by adding links is that those links go somewhere else (e.g., to a paper on another journal's site, to an external database). So, why would you do this? Why actively send people away? Google can do this because you will always come back. Other sites don't have this luxury. In the case of citations (i.e., adding DOIs to the list of literature cited) I guess the tradeoff is that because all journals are in this together, you will receive traffic from your "rivals" if papers they publish cite your content. So you are building a network across which traffic can flow (i.e., the "citation graph"), one that will potentially drive traffic to you as well as send people away.

If we add other sorts of links (say to GenBank, taxon databases, specimen databases like GBIF, locality databases such as Protected Planet, etc.) then that traffic has no obvious way of coming back. This also has a deeper consequence: those databases don't "know" about these links, so we lose the reverse citation information. For example, a semantically marked-up paper may know that it cites a sequence, but that sequence doesn't know that it has been cited. We can't build the equivalent citation graphs for data.

One way to tackle this is to bring the data "in house", and this is what Pensoft are doing with their markup of ZooKeys. But they are basically creating mashups of external data sources (a bit like iSpecies). We need to do better.

Prototyping

One way to make this more concrete is to think how a hub could be prototyped. Let's imagine we start with a platform like Semantic MediaWiki. I've an on-again/off-again relationship with Semantic MediaWiki: it's powerful but basically a huge, ugly hack on top of a huge piece of software that wasn't designed for this purpose. Still, it's a way to conceptualise what can be done. So, I'm not arguing that Semantic MediaWiki is how to do this for real (trust me, I'm really, really not), but that it's a way to explore the idea of a hub.

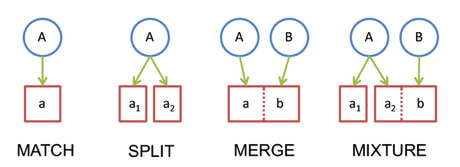

OK, let's say we start with a paper, say an Open Access paper from PLoS. We parse the XML, extract every entity that matters (authors, cited articles, GenBank accessions, taxon names, georeferenced localities, specimen codes, chemical compounds, etc.) and then create pages for each entity (including the article itself). Each page has a URL that uses an external id (where available) as a "slug" (e.g., the URL for the page for a GenBank sequence includes the accession number).
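To make the "explode" step concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the XML is a toy, heavily simplified stand-in for real publisher XML, the author name and GenBank accession are placeholders, the accession regex is a rough heuristic, and the `slug` and `explode` helpers and the URL layout are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Toy, heavily simplified article XML (an assumption; real publisher XML is far richer).
# The author name and the accession number are placeholders, not real data.
SAMPLE_XML = """
<article>
  <doi>10.1371/journal.pone.0000831</doi>
  <author>Example Author</author>
  <body>Prey sequences were deposited in GenBank (accession ZZ999999).
  The study species was <taxon>Eudyptes chrysolophus</taxon>.</body>
</article>
"""

# Rough heuristic for accession-like strings: 1-2 capital letters, then 5-6 digits.
ACCESSION = re.compile(r"\b[A-Z]{1,2}\d{5,6}\b")


def slug(kind, identifier):
    """Build a page URL path that embeds the external identifier."""
    safe = re.sub(r"[^A-Za-z0-9._-]+", "_", identifier.strip())
    return f"/{kind}/{safe}"


def explode(xml_text):
    """Return the article's page and the sorted set of pages for every entity found."""
    root = ET.fromstring(xml_text.strip())
    article = slug("article", root.findtext("doi"))
    pages = {article}
    for author in root.iter("author"):
        pages.add(slug("person", author.text))
    for taxon in root.iter("taxon"):
        pages.add(slug("taxon", taxon.text))
    # Accessions are not tagged in this toy XML, so scan the body text for them.
    body_text = "".join(root.find("body").itertext())
    for accession in ACCESSION.findall(body_text):
        pages.add(slug("sequence", accession))
    return article, sorted(pages)


article_page, entity_pages = explode(SAMPLE_XML)
```

In a real hub each entity type would need its own extractor (taxon name recognition, specimen code parsing, and so on); the point here is only that every entity ends up with a page whose URL carries its external id.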

Now we have exploded the article into a set of pages, which are linked to the source article (we can use Semantic MediaWiki to specify the type of those links), and each entity "knows" that it has a relationship with that article.
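The two-way nature of these links can be sketched as a tiny in-memory store. This is not how Semantic MediaWiki stores things; the `LinkStore` class, the link type name, and the page paths are all hypothetical, and the accession is a placeholder. The only idea being illustrated is that recording a link once makes it visible from both ends.

```python
from collections import defaultdict


class LinkStore:
    """Hypothetical store of typed links that can be read in both directions."""

    def __init__(self):
        self.outgoing = defaultdict(set)  # source page -> {(link type, target page)}
        self.incoming = defaultdict(set)  # target page -> {(link type, source page)}

    def add(self, source, link_type, target):
        # Record the link once, indexed from both ends.
        self.outgoing[source].add((link_type, target))
        self.incoming[target].add((link_type, source))

    def cited_by(self, page):
        """Pages that link to `page`, i.e. the reverse citation information."""
        return sorted(source for _, source in self.incoming.get(page, ()))


store = LinkStore()
store.add("/article/10.1371_journal.pone.0000831", "cites_sequence", "/sequence/ZZ999999")
```

With this in place the sequence page can list the articles that cite it, which is exactly the information that is lost when the link simply points out to an external database.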

Then we set about populating each page. The article page is trivial, just reformat the text. For other entities we construct pages using data from external databases wherever possible (e.g., metadata about a sequence from GenBank).

So far we have just one article. But that article is linked to a bunch of other articles (those that it cites), and there may be other less direct links (e.g., GenBank sequences are linked to the article that publishes them, taxonomic names may be linked to articles that publish the name, etc.). We could add all these articles to a queue and process each article in the same way. In a sense we are now crawling a graph that includes the citation graph, but it includes links the citation graph misses (such as articles that cite the same data, see Enhanced display of scientific articles using extended metadata for more on this).
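The queue-based crawl could look something like the breadth-first sketch below. The `crawl` function, the `neighbours` callback, and the `LINKS` table are hypothetical; in practice `neighbours` would explode an article and return every article reachable from it, whether through a citation or through shared data.

```python
from collections import deque


def crawl(seeds, neighbours, limit=1000):
    """Visit articles breadth-first, starting from the seeds, each at most once."""
    queue = deque()
    seen = set()
    for seed in seeds:
        if seed not in seen:
            seen.add(seed)
            queue.append(seed)
    order = []
    while queue and len(order) < limit:
        article = queue.popleft()
        order.append(article)  # this is where the article would be exploded
        for linked in neighbours(article):
            if linked not in seen:
                seen.add(linked)
                queue.append(linked)
    return order


# Hypothetical link table standing in for citation and shared-data links.
LINKS = {"A": ["B", "C"], "B": ["C", "D"], "C": [], "D": ["A"]}
visited = crawl(["A"], lambda article: LINKS.get(article, []))
```

The `seen` set stops the crawler from looping when articles link back to each other, and `limit` is a crude brake so that a prototype doesn't try to swallow the entire literature.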

The first hurdle we hit will be that many articles are not open access, in which case they can't be exploded into full text and associated entities. But that's OK, we can create article pages that simply display the article metadata, and link(s) to articles in the citation graph. Furthermore, we can get some data citation links for closed access articles, e.g. from GenBank.

So now we let the crawler loose. We could feed it a bunch of articles to start with, e.g., those in the original Hub (if that list still exists) and those from various RSS feeds (PLoS, ZooKeys, BMC, etc.).

Entry points

Users can enter the hub in various ways. Via an article would be the traditional way (e.g., via a link from the article itself on the PLoS site). But let's imagine we are interested in a particular organism, such as Macaroni Penguins. PLoS has an interesting article on their feeding [Studying Seabird Diet through Genetic Analysis of Faeces: A Case Study on Macaroni Penguins (Eudyptes chrysolophus) doi:10.1371/journal.pone.0000831]. This article includes sequence data for prey items. If we enhance this article we build connections between the bird and its prey. At the simplest level, the hub pages for the crustacea and fish it feeds on would include citations to this article, enabling people to follow that connection (with more sophistication the nature of the relationship could also be specified). This article refers to a specific locality and penguin colony, which is in a marine reserve (Heard Island and McDonald Islands Marine Reserve). Extract these entities, and other papers relevant to this area would be linked as they are added (e.g., using a geospatial query). Hence people interested in what we know about the biology of organisms in specific localities would dive in via the name (or URL) of the locality.
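As a rough idea of what a geospatial query might involve, here is a sketch that finds locality pages within a given distance of a point using the haversine formula. A real hub would more likely lean on a spatial index or the reserve's actual boundary; the `pages_near` helper, the page paths, and the coordinates are all hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def pages_near(localities, lat, lon, radius_km):
    """Return locality pages whose coordinates fall within radius_km of (lat, lon)."""
    return sorted(
        page
        for page, (plat, plon) in localities.items()
        if haversine_km(lat, lon, plat, plon) <= radius_km
    )


# Hypothetical locality pages with made-up coordinates (latitude, longitude).
LOCALITIES = {
    "/locality/colony_a": (-53.1, 73.5),
    "/locality/colony_b": (-53.0, 73.4),
    "/locality/far_away": (-54.3, -36.5),
}
nearby = pages_near(LOCALITIES, -53.1, 73.5, radius_km=50)
```

Each time a newly crawled article yields a georeferenced locality, a query like this would pick up the existing pages it should be linked to.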

Summary

The core idea here is taking an article, exploding it, and treating every element as a first class citizen of the resulting database. It is emphatically not about a nice way to display a bunch of articles, it's not a "collection", and articles aren't privileged. Nor are articles from any particular publisher privileged. One consequence of this is that it may not appeal to an individual publisher because it's not about making a particular publisher's content look better than another's (although having Open Access content in XML makes it much easier to play this game).

The real goal of an approach like this is to end up with a database (or "knowledgebase") that is much richer than simply a database of articles (or sequences, or specimens), and which moves from being a platform for repurposing article text to a platform for facilitating discovery.

